Do You Know What Secrets Your SQL Servers Are Sharing?

Here's how to check the current state of connections to the production SQL Server, what to look for and why.

It’s a scary world out there for a DBA. You have to be constantly on guard against a rogues’ gallery of security threats, including the threat that authenticated users can pose. I may sound paranoid and pessimistic, but I choose to consider myself pragmatic. Database consumers have a propensity for accessing databases by any means necessary, or, rather, by any means that the processes and architectures created by others have enabled. Access the DBA never intended can also be deliberate on the user’s part. For example, a developer or DBA who doesn’t have rights to connect to the production SQL Server using his or her network login could “backdoor” in through SQL Server Management Studio with a SQL login and password. You also have to consider issues related to misconfiguration of the SQL Server environment by yourself and your fellow DBAs.

I recently found myself at an engagement where we were encountering connection issues. I decided to open my toolbox to get a snapshot of the current state of connections to the production SQL Server, and thought it wise to share the insights I was able to gain from this script. Here are some recommendations on what to look for and why.

Opening the SQL Server Toolbox

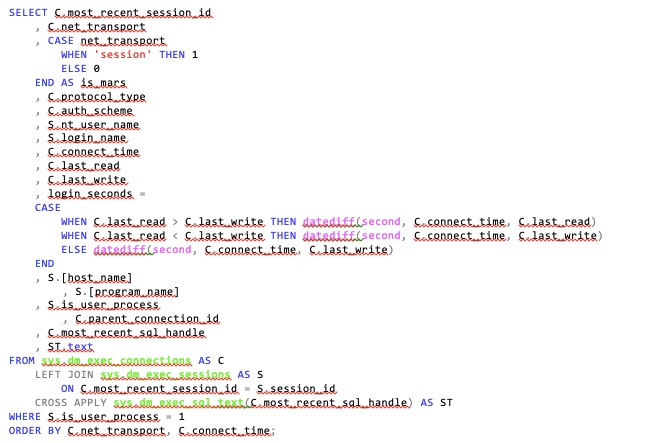

The code for gathering insights into your SQL Server connections configuration and activity is quite simple considering the information it returns. The core SQL Server objects for this diagnostic query are three Dynamic Management Objects (a.k.a. DMOs):

dm_exec_connections – Provides data around external connections to SQL Server.

dm_exec_sessions – This view offers up metadata around what those connections are doing once they’ve authenticated to the SQL Server instance.

dm_exec_sql_text – This function expects a sql_handle parameter. A sql_handle is a unique identifier for a batch of Transact-SQL code on a given SQL Server instance. The function returns the T-SQL code for the sql_handle that is supplied.

There are a couple of general concepts around connections and sessions to consider as you look at the query and the associated results:

Sessions are uniquely identified by their session_id.

Connections can be re-used. As a result, the unique identifier for a session on a given connection is not session_id, but, rather, most_recent_session_id.

Since connections can be re-used, the same concept holds true for the sql_handle identifier mentioned previously. The last session’s sql_handle is stored in dm_exec_connections within the most_recent_sql_handle.

Connections can be nested if Multiple Active Result Sets (a.k.a. MARS) are being used. (I'll talk more about MARS later in the article.)
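To see the identifiers described above side by side, a minimal sketch like the following may help. It reads only columns exposed by sys.dm_exec_connections; the column selection and sort order are illustrative assumptions, not part of the original script.

```sql
-- Sketch: show how connection, session and MARS parent identifiers relate.
SELECT C.connection_id
     , C.parent_connection_id      -- NULL unless this is a MARS logical connection
     , C.session_id
     , C.most_recent_session_id    -- the identifier used for joins in this article
     , C.net_transport
     , C.most_recent_sql_handle    -- handle of the last batch run on the connection
FROM sys.dm_exec_connections AS C
ORDER BY C.most_recent_session_id, C.connection_id;
```

Rows that share a most_recent_session_id but carry different connection_id values are worth a closer look, since that pattern is what nested (MARS) connections tend to produce.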

Considering these base rules should allow you to make sense of the script below, even if you’re seeing Dynamic Management Objects for the first time:

SELECT C.most_recent_session_id
    , C.net_transport
    , CASE C.net_transport
        WHEN 'Session' THEN 1
        ELSE 0
      END AS is_mars
    , C.protocol_type
    , C.auth_scheme
    , S.nt_user_name
    , S.login_name
    , C.connect_time
    , C.last_read
    , C.last_write
    , login_seconds =
        CASE
            WHEN C.last_read > C.last_write THEN DATEDIFF(second, C.connect_time, C.last_read)
            ELSE DATEDIFF(second, C.connect_time, C.last_write)
        END
    , S.[host_name]
    , S.[program_name]
    , S.is_user_process
    , C.parent_connection_id
    , C.most_recent_sql_handle
    , ST.text
FROM sys.dm_exec_connections AS C
    LEFT JOIN sys.dm_exec_sessions AS S
        ON C.most_recent_session_id = S.session_id
    CROSS APPLY sys.dm_exec_sql_text(C.most_recent_sql_handle) AS ST
WHERE S.is_user_process = 1
ORDER BY C.net_transport, C.connect_time;

What may throw you is the LEFT JOIN from dm_exec_connections to dm_exec_sessions. We are just as interested in connections without any corresponding activity as we are in those running queries. I want to have good connection channels and cleanup on my SQL Servers. Being able to analyze connection health means that all connections are in scope. Also note the use of the most_recent_session_id and most_recent_sql_handle columns mentioned previously.
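One caveat is worth checking on your own instance. A WHERE predicate on S.is_user_process discards any row where no session matched, and CROSS APPLY discards any connection whose most_recent_sql_handle yields no text. If you want those connections to stay in scope, one possible variant is to allow unmatched sessions explicitly and switch to OUTER APPLY. This is a sketch under that assumption, not the script that produced the results discussed in this article.

```sql
-- Sketch: keep connections that have no matching session row or no SQL text.
SELECT C.connection_id
     , C.most_recent_session_id
     , C.net_transport
     , C.connect_time
     , S.login_name
     , S.[program_name]
     , ST.text
FROM sys.dm_exec_connections AS C
    LEFT JOIN sys.dm_exec_sessions AS S
        ON C.most_recent_session_id = S.session_id
    -- OUTER APPLY returns the connection even when no text is available
    OUTER APPLY sys.dm_exec_sql_text(C.most_recent_sql_handle) AS ST
WHERE S.is_user_process = 1
   OR S.session_id IS NULL          -- connection with no matching session
ORDER BY C.connect_time;
```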

I’m sorting by net_transport mainly to classify the connection types in this article. If this were not strictly an educational exercise, I’d stick to returning results based on oldest connections first. The original purpose for this query was to look for poor connection cleanup, and those offenders would likely rise to the top--with some exceptions, as you’ll see in the results below:


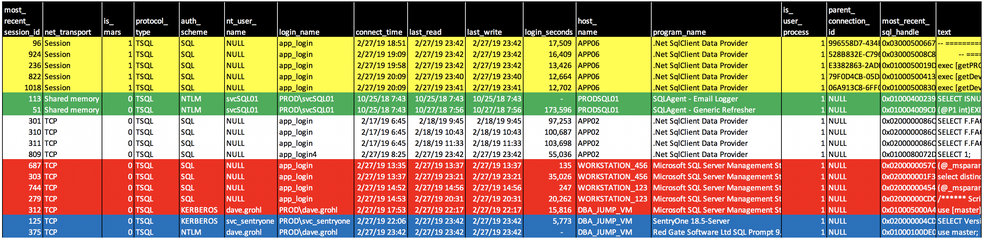

I’ve pulled the results into Microsoft Excel to do some formatting; this lets me address each color-coded grouping separately and call out what I’m most interested in identifying in each:

Multiple Active Result Sets (MARS) – Yellow

I’m able to determine that a connection is making use of MARS based on two identifying traits. The first is the one I relied on in the script code: net_transport returns a value of “Session” for any MARS-enabled connection, regardless of the true network protocol used. The second identifier is that parent_connection_id is populated.

I briefly mentioned MARS earlier in the article. The concept is quite deep, and the practice of using MARS is not widely adopted, so I won’t dwell on the details. When MARS is configured (in the connection string for the application), it allows for executing multiple batches of T-SQL on a single connection. This leads to multiple sessions spawning from a single connection and allows applications to interleave reads between the sessions.

MARS has its pros and cons. Because it allows for interleaving read-based actions such as SELECT, FETCH and RECEIVE statements, it can save the overhead of repeatedly opening and closing connections. This is good. The negatives outweigh the benefits, though. MARS is an edge topic that most DBAs are unfamiliar with. Interleaving is not compatible with INSERT, UPDATE and DELETE statements; when those types of statements are submitted, they pause any reads so they can complete in their entirety. This causes a major latency hit. Finally, stored procedures, functions and triggers are not interleave-friendly. If you plan on using MARS, your application code should also be written with MARS in mind.
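The two MARS traits described above can be combined in one query. The sketch below pairs each MARS logical connection with its parent so the true transport is visible; the aliases MC and PC are hypothetical names chosen for illustration, and the string comparison assumes a case-insensitive collation.

```sql
-- Sketch: list MARS logical connections alongside their physical parent connection.
SELECT MC.connection_id          AS mars_connection_id
     , MC.parent_connection_id
     , PC.net_transport          AS parent_net_transport  -- the real network protocol
     , PC.client_net_address
     , MC.most_recent_session_id
     , S.login_name
     , S.[program_name]
FROM sys.dm_exec_connections AS MC
    INNER JOIN sys.dm_exec_connections AS PC
        ON MC.parent_connection_id = PC.connection_id
    LEFT JOIN sys.dm_exec_sessions AS S
        ON MC.most_recent_session_id = S.session_id
WHERE MC.net_transport = 'Session'
ORDER BY MC.parent_connection_id;
```

An empty result simply suggests that no application currently connected has MARS enabled in its connection string.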

Connecting Locally from the SQL Server – Green

Remember those horror movies where the babysitter is alone in a dark house, the phone rings, and the caller is INSIDE THE HOUSE? That’s what we see here. I’m able to identify the connections as local through the value of “Shared Memory” for the net_transport column. Shared memory’s boundaries are the server walls themselves. If you enable only the shared memory protocol on your SQL Server, it effectively blocks external connections.

Typically, local connections to SQL Server are signs that the babysitter is about to take one for the team (in the figurative sense), particularly when you see that the program_name value from dm_exec_sessions is “Microsoft SQL Server Management Studio.” Local connections can mean one of two things:

Someone is remoting into a SQL Server, launching Management Studio, and executing queries along with draining CPU and RAM from the pool of available resources through the simple act of initiating a remote session and running Management Studio.

An internal process, such as SQL Agent Jobs, is being witnessed.

As is the case here, these are the latter: benign connections triggered from within SQL Agent. The program name, along with the login name being the SQL Agent service account, gives this away.
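To separate the two cases quickly, something like the following sketch could be used. The program_name prefixes in the CASE expression are assumptions based on commonly seen values, so verify them against what your own instance reports; the comparison on net_transport also assumes a case-insensitive collation.

```sql
-- Sketch: classify local (shared memory) connections by the program that opened them.
SELECT S.session_id
     , S.login_name
     , S.[host_name]
     , S.[program_name]
     , C.connect_time
     , CASE
           WHEN S.[program_name] LIKE 'Microsoft SQL Server Management Studio%'
               THEN 'Management Studio run on the server'   -- assumed prefix
           WHEN S.[program_name] LIKE 'SQLAgent%'
               THEN 'SQL Agent'                             -- assumed prefix
           ELSE 'Other local process'
       END AS local_source
FROM sys.dm_exec_connections AS C
    INNER JOIN sys.dm_exec_sessions AS S
        ON C.most_recent_session_id = S.session_id
WHERE C.net_transport = 'Shared memory'
  AND S.is_user_process = 1
ORDER BY C.connect_time;
```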

Nothing to See Here… or is There? – White

There are no surprises here, but there are concerns the DBA should consider:

SQL Server Authentication is less secure than Trusted/AD Authentication. I can tell that SQL security is in play from the values of the auth_scheme, nt_user_name and login_name columns.

Poor connection cleanup: I’d question why connections are being held open for days at a time.
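Both concerns can be checked together. The sketch below lists SQL-authenticated user connections that have been open for more than a day, oldest first; the one-day threshold is an arbitrary assumption to adjust for your environment.

```sql
-- Sketch: SQL-authenticated connections held open longer than one day.
SELECT S.login_name
     , S.[host_name]
     , S.[program_name]
     , C.auth_scheme
     , C.connect_time
     , DATEDIFF(hour, C.connect_time, GETDATE()) AS hours_open
     , C.last_read
     , C.last_write
FROM sys.dm_exec_connections AS C
    INNER JOIN sys.dm_exec_sessions AS S
        ON C.most_recent_session_id = S.session_id
WHERE S.is_user_process = 1
  AND C.auth_scheme = 'SQL'                           -- SQL Server Authentication
  AND C.connect_time < DATEADD(day, -1, GETDATE())    -- assumed threshold
ORDER BY C.connect_time;                              -- oldest connections first
```

A large gap between connect_time and the later of last_read and last_write hints at a connection that is being held open without doing any work.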

Management Studio – Red

Every tool has a purpose, and every tool can be misused. I’m not concerned with SQL Server Management Studio use here for our good buddy Dave Grohl. We all know Dave can do no wrong. Plus he’s a DBA. What does concern me, though, are the other connections from Management Studio using the application’s SQL login. If I saw that in the real world, you can bet that I’d find out who uses those workstations and provide some education. Why?

We have individuals using an application login that can’t be tied to an individual. This is carte blanche ability to do intentional and unintentional harm without attribution.

It’s not uncommon to find application logins with more rights than necessary. Often, the application is used as a barrier to access when rights are lazily granted. For example, when a login is granted db_owner permissions on the database, it allows whoever connects with that login to do just about anything to the database, including dropping it. If the application doesn’t provide a channel that would enable nefarious actions, like the dropping of a database, a lazy DBA might feel that security is “good enough.” It’s not. If a user has access to Management Studio and the login and password for the application login, then the user can bypass any application-centric hurdles and have free rein over the database. Connecting through Management Studio with an application login is one of the biggest red flags I see when looking at this data.
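Both red flags can be hunted for directly. The first query in this sketch looks for Management Studio sessions that authenticated with a SQL login; the second lists members of db_owner in whichever database you run it from. The program_name prefix is an assumption to confirm against your own results.

```sql
-- Sketch 1: Management Studio sessions that authenticated with a SQL login.
SELECT S.session_id
     , S.login_name
     , S.[host_name]
     , C.client_net_address
     , C.connect_time
FROM sys.dm_exec_connections AS C
    INNER JOIN sys.dm_exec_sessions AS S
        ON C.most_recent_session_id = S.session_id
WHERE S.[program_name] LIKE 'Microsoft SQL Server Management Studio%'  -- assumed prefix
  AND C.auth_scheme = 'SQL'
ORDER BY S.login_name, C.connect_time;

-- Sketch 2: who holds db_owner in the current database.
SELECT M.name AS member_name
     , M.type_desc
FROM sys.database_role_members AS RM
    INNER JOIN sys.database_principals AS R
        ON RM.role_principal_id = R.principal_id
    INNER JOIN sys.database_principals AS M
        ON RM.member_principal_id = M.principal_id
WHERE R.name = N'db_owner';
```

A login that appears in both result sets is the combination described above: an over-privileged application login being used interactively.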

Application and Plug-In Connections – Blue

When I look at program_name, login_name and host_name I can identify the purpose of these connections. What I want to look for here is the logins associated with a particular application, to make sure it’s being used properly. In the case of the last connection, you may raise a concern about Dave’s login being used for the Redgate tool--until you understand that it runs in the context of the person who is using Management Studio where it resides as a plug-in.
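When there are many connections, summarizing by those three columns can make unexpected combinations stand out. This is a minimal sketch; the aggregate columns are illustrative additions rather than part of the original query.

```sql
-- Sketch: summarize user connections by application, login and client host.
SELECT S.[program_name]
     , S.login_name
     , S.[host_name]
     , COUNT(*)            AS connection_count
     , MIN(C.connect_time) AS oldest_connect_time
FROM sys.dm_exec_connections AS C
    INNER JOIN sys.dm_exec_sessions AS S
        ON C.most_recent_session_id = S.session_id
WHERE S.is_user_process = 1
GROUP BY S.[program_name], S.login_name, S.[host_name]
ORDER BY S.[program_name], S.login_name, S.[host_name];
```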

Conclusion

As you can see, a simple query can provide a vast pool of information about who is connecting to your SQL Servers and how. Furthermore, you can see what these connections are executing and how long they are left open, as well as identify a host of misuses on the server. Now, if you’ll excuse me, I need to look into who is using Management Studio with that application login!
