How Can You Trust ChatGPT? AI TRiSM

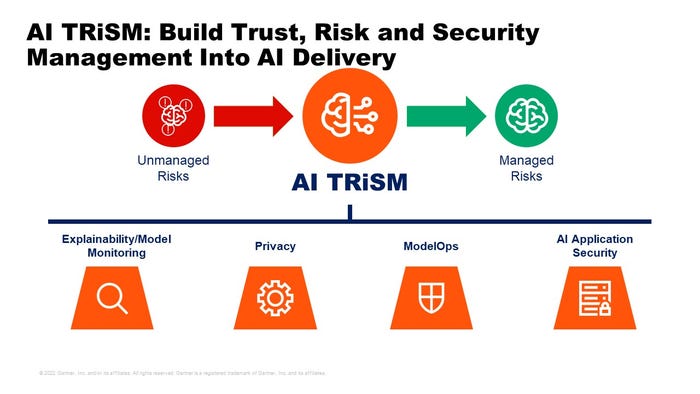

AI Trust, Risk and Security Management (AI TRiSM) is made possible by applying cross-disciplinary practices, methodologies, and tools to AI models.

January 24, 2023

AI Trust, Risk and Security Management (AI TRiSM) is made possible by applying cross-disciplinary practices and methodologies to AI models and their supporting data, and by adopting tool sets that support these practices.

We just published an update to our Market Guide for AI Trust, Risk and Security Management, which lays out a framework for managing model trust, risk and security, and lists sample vendors in the niche software categories that support that framework.

[Figure: AI TRiSM architecture]

AI TRiSM methods and tools work with any model, ranging from open-source LLMs like ChatGPT to homegrown enterprise models that use a variety of AI techniques. Of course, with open-source models, there are some differences – for example, with regard to protecting enterprise training data on the shared infrastructure used to update the model for enterprise use cases.

Additionally, enterprises have no discrete ability to govern open-source models using the ModelOps tools we write about in the Market Guide.

But explainability, model monitoring, and AI application security tools can all be used on any open-source or proprietary model to achieve the trustworthiness and reliability enterprise users need. In fact, they should be used on third-party models and products that embed AI to keep solution providers honest and ensure products perform as advertised.

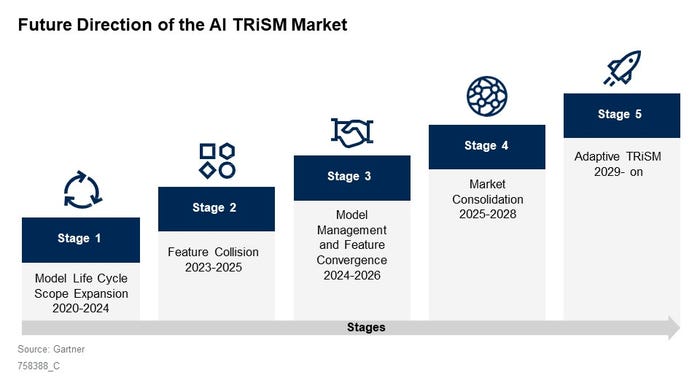

The AI TRiSM market is still new and fragmented, and most enterprises don’t apply TRiSM methodologies and tools until models are deployed. That’s shortsighted because building trustworthiness into models from the outset – during the design and development phase – will lead to better model performance.

As AI models proliferate, we expect AI TRiSM methods and tools will be more commonly adopted by teams across the enterprise involved in AI. Here’s the roadmap we see for this market.

[Figure: AI TRiSM market direction]

This article originally appeared on the Gartner Blog Network.
